Automatic Detection of Auditory, Visual and Physiological Parameters for the Diagnosis of Affective Disorders

EU Funded Project Affective Mind

Project Justification

Mental illnesses are among the most prevalent health conditions worldwide. In Germany, approximately one in two to three adults will experience a mental disorder at some point in their lives. According to data from statutory health insurance providers, mental illnesses represent the leading cause of long-term incapacity to work. Nearly half of these diagnoses fall within the category of affective disorders, such as depression.

Previous research has indicated that individuals with affective disorders differ from mentally healthy individuals not only in their psychological health status but also in various behavioral and physiological characteristics – including vocal features, facial expressions, and biosignals. Despite their diagnostic relevance, these non-verbal and physiological indicators are not yet systematically incorporated into current clinical diagnostic practices. However, they hold great potential for the future development of objective, data-driven tools to support early detection, differential diagnosis, and monitoring of mental disorders.

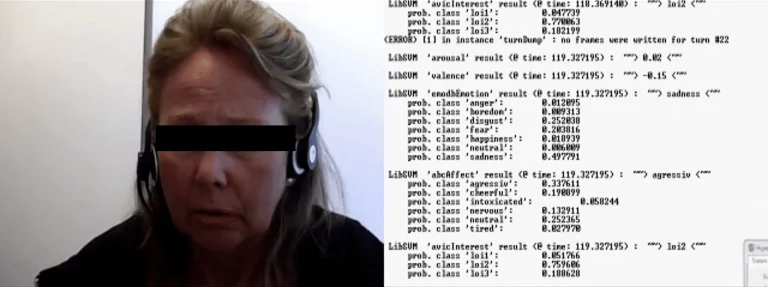

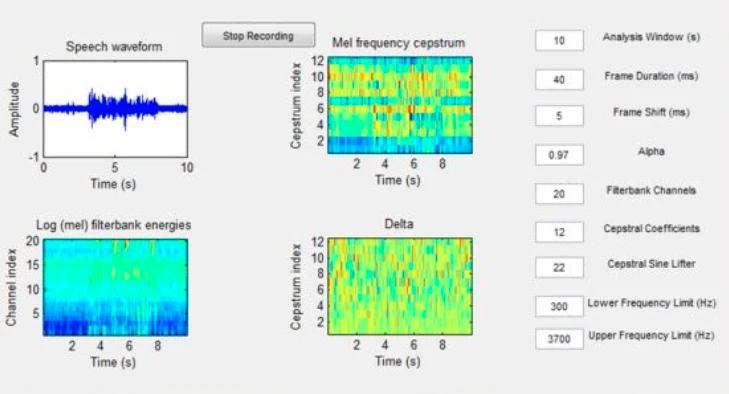

Automatic Speech Feature Extraction. Input: real-time PC speaker audio. Processing (a): extraction of Mel-frequency cepstral coefficients (MFCCs). Output (b): BDI (Beck Depression Inventory) depression score.
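To make the MFCC processing step concrete, the following is a minimal sketch of how cepstral coefficients can be computed for a single audio frame. It is an illustration only, not the project's implementation: the function names, the Hamming window, the 20 mel filters, and the 13 output coefficients are all assumptions chosen for readability, and the mapping from MFCCs to a BDI score is not covered here because the source does not describe it.

```python
import cmath
import math


def hz_to_mel(freq_hz):
    """Convert a frequency in Hz to the mel scale (common 2595*log10 form)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def power_spectrum(frame):
    """Hamming-window the frame and return its one-sided power spectrum."""
    n = len(frame)
    windowed = [x * (0.54 - 0.46 * math.cos(2.0 * math.pi * i / (n - 1)))
                for i, x in enumerate(frame)]
    spectrum = []
    for k in range(n // 2 + 1):
        # Plain DFT; slow but dependency-free, fine for short frames.
        acc = sum(windowed[t] * cmath.exp(-2j * math.pi * k * t / n)
                  for t in range(n))
        spectrum.append(abs(acc) ** 2 / n)
    return spectrum


def mel_filterbank(n_filters, n_fft, sample_rate):
    """Build triangular filters spaced evenly on the mel scale."""
    low, high = hz_to_mel(0.0), hz_to_mel(sample_rate / 2.0)
    mel_points = [low + (high - low) * i / (n_filters + 1)
                  for i in range(n_filters + 2)]
    bins = [int(math.floor((n_fft + 1) * mel_to_hz(m) / sample_rate))
            for m in mel_points]
    bank = []
    for j in range(1, n_filters + 1):
        left, center, right = bins[j - 1], bins[j], bins[j + 1]
        row = [0.0] * (n_fft // 2 + 1)
        for k in range(left, center):       # rising slope
            row[k] = (k - left) / (center - left)
        for k in range(center, right):      # falling slope
            row[k] = (right - k) / (right - center)
        bank.append(row)
    return bank


def mfcc_frame(frame, sample_rate, n_filters=20, n_coeffs=13):
    """Return MFCCs for one frame: log mel energies followed by a DCT-II."""
    spectrum = power_spectrum(frame)
    bank = mel_filterbank(n_filters, len(frame), sample_rate)
    log_energies = [
        math.log(max(sum(w * p for w, p in zip(row, spectrum)), 1e-10))
        for row in bank
    ]
    return [
        sum(e * math.cos(math.pi * k * (i + 0.5) / n_filters)
            for i, e in enumerate(log_energies))
        for k in range(n_coeffs)
    ]
```

In a real-time setting, a function like `mfcc_frame` would typically be applied to short overlapping frames of the incoming audio stream, and the resulting coefficient sequences passed to a trained model. A production system would more likely rely on an FFT-based audio library than on the plain DFT used here.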

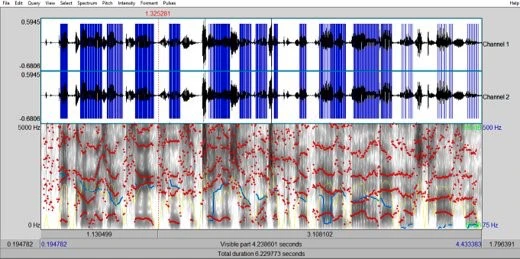

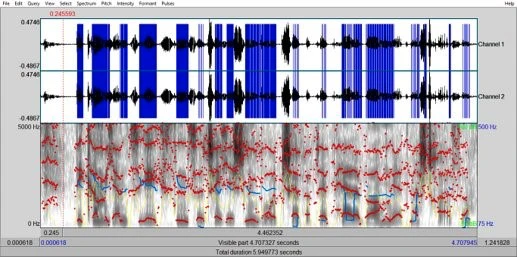

Depression Symptoms: (a) Low-Energy vs. (b) High-Energy Sample

Our Approach

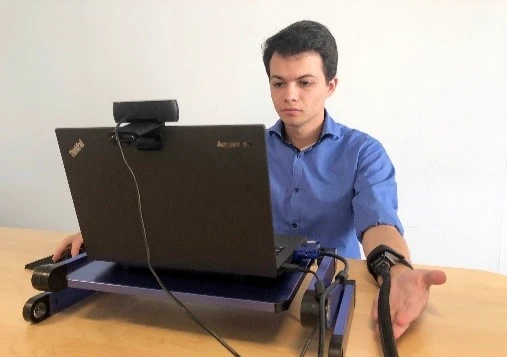

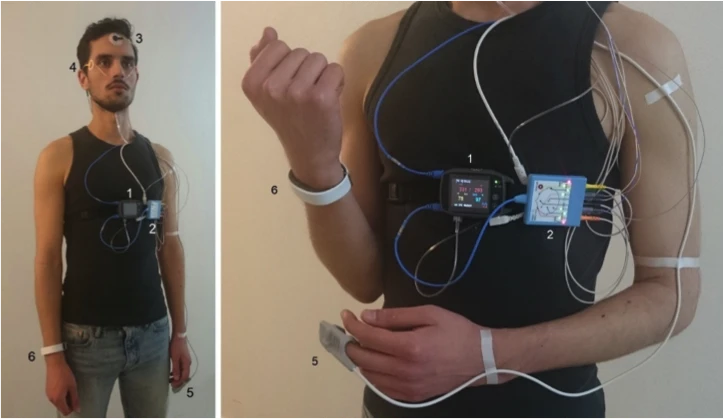

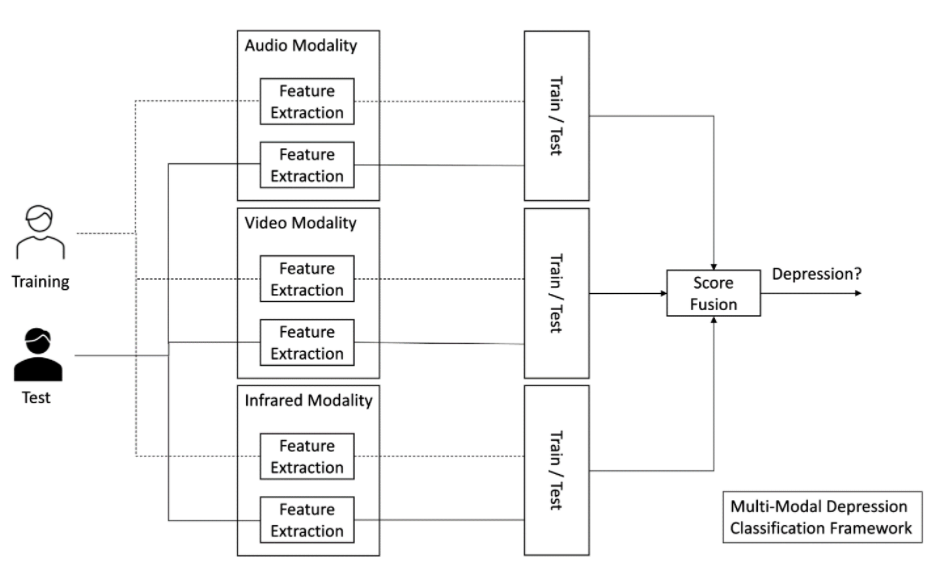

The EU-funded project Affective Mind aims to develop an automated system for the detection of affective disorders based on multimodal data analysis. The core objective is to identify and interpret auditory, visual, and physiological parameters that differentiate individuals with affective disorders from mentally healthy individuals.

To achieve this, the system will be trained using data collected from clinical populations diagnosed with affective disorders, alongside a control group of healthy participants. Key features – such as vocal patterns, facial expressions, and physiological signals – will be systematically analyzed to extract discriminative markers.

Subsequently, the system will be validated using an independent test sample to evaluate its predictive accuracy and diagnostic reliability. By combining state-of-the-art technologies in affective computing and psychophysiology, Affective Mind seeks to lay the groundwork for objective, non-invasive, and scalable diagnostic tools in mental health care.
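The train-then-validate procedure described above can be sketched as follows. This is a hedged illustration rather than the project's evaluation protocol: the helper names `stratified_split` and `diagnostic_metrics`, the 30% test fraction, and the choice of accuracy, sensitivity, and specificity as metrics are assumptions. The split is stratified so that patients and healthy controls are both represented in the independent test sample.

```python
import random


def stratified_split(labels, test_fraction=0.3, seed=0):
    """Split sample indices into train/test sets, preserving class balance.

    labels: one class label per participant (e.g. 1 = patient, 0 = control).
    Returns two sorted, disjoint lists of indices.
    """
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_test = max(1, round(len(idx) * test_fraction))
        test_idx.extend(idx[:n_test])
        train_idx.extend(idx[n_test:])
    return sorted(train_idx), sorted(test_idx)


def diagnostic_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, sensitivity and specificity from binary predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    nan = float("nan")
    return {
        "accuracy": (tp + tn) / len(pairs) if pairs else nan,
        # Share of true patients that the system flags.
        "sensitivity": tp / (tp + fn) if (tp + fn) else nan,
        # Share of healthy controls that the system clears.
        "specificity": tn / (tn + fp) if (tn + fp) else nan,
    }
```

Only the training indices would be used to fit the model; the metrics are then computed once on the held-out indices, so that the reported figures reflect performance on participants the system has not seen.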

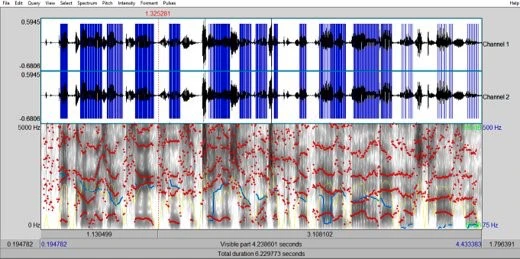

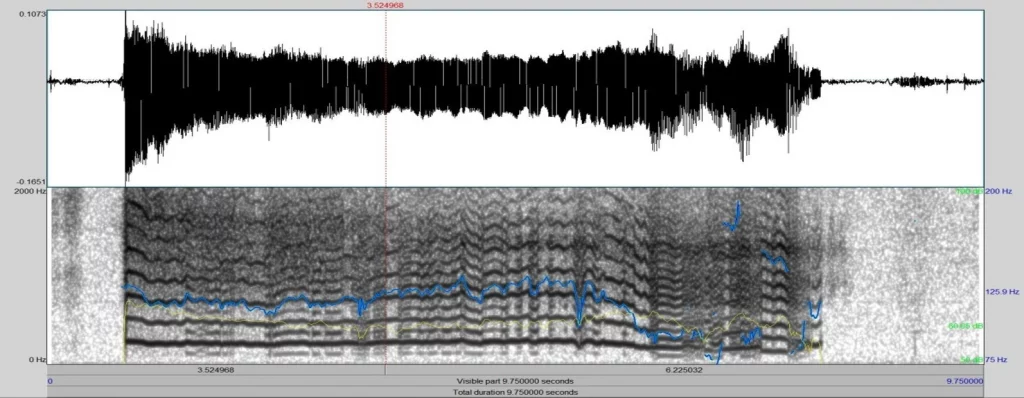

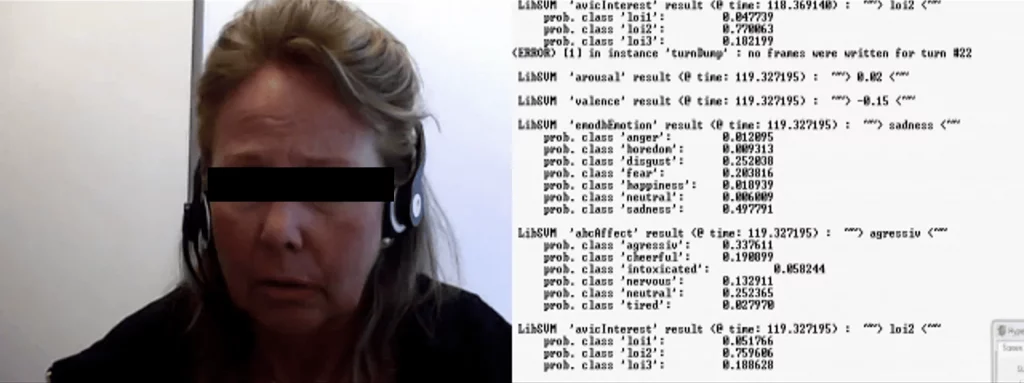

Example of extracting key metrics from time-frequency diagrams of the speech signal. The horizontal bars mark the resonance frequencies of the vocal tract (formants), which are associated with jaw opening, horizontal tongue position, and vocal tract tension. Vocal tract parameters provide, among other things, information on depression-associated speaker states such as sadness, fatigue, depressive states, and comorbid anxiety states.
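One common way to estimate vocal tract resonance frequencies from a speech frame is linear predictive coding (LPC) followed by peak picking on the resulting spectral envelope. The sketch below illustrates that general technique under stated assumptions; the source does not say which method the project uses. The names `lpc`, `formant_peaks`, and `synth_vowel` are hypothetical, and `synth_vowel` only generates an artificial test signal with known resonances.

```python
import cmath
import math


def lpc(signal, order):
    """Estimate LPC coefficients [1, a1, ..., ap] via Levinson-Durbin."""
    n = len(signal)
    r = [sum(signal[i] * signal[i + lag] for i in range(n - lag))
         for lag in range(order + 1)]
    a = [1.0] + [0.0] * order
    err = r[0]
    for i in range(1, order + 1):
        acc = r[i] + sum(a[j] * r[i - j] for j in range(1, i))
        k = -acc / err                      # reflection coefficient
        new_a = a[:]
        for j in range(1, i):
            new_a[j] = a[j] + k * a[i - j]
        new_a[i] = k
        a = new_a
        err *= (1.0 - k * k)
    return a


def formant_peaks(a, sample_rate, n_points=512):
    """Return frequencies (Hz) of local maxima of the LPC envelope 1/|A|^2."""
    env = []
    for m in range(n_points + 1):
        w = math.pi * m / n_points
        resp = sum(c * cmath.exp(-1j * w * k) for k, c in enumerate(a))
        env.append(1.0 / max(abs(resp) ** 2, 1e-12))
    return [m * (sample_rate / 2.0) / n_points
            for m in range(1, n_points)
            if env[m] > env[m - 1] and env[m] >= env[m + 1]]


def synth_vowel(formants_hz, sample_rate, n_samples=400, bandwidth_hz=100.0):
    """Test helper: impulse response of cascaded two-pole resonators."""
    signal = [1.0] + [0.0] * (n_samples - 1)
    for f in formants_hz:
        radius = math.exp(-math.pi * bandwidth_hz / sample_rate)
        a1 = -2.0 * radius * math.cos(2.0 * math.pi * f / sample_rate)
        a2 = radius * radius
        out = []
        for n, x in enumerate(signal):
            y1 = out[n - 1] if n >= 1 else 0.0
            y2 = out[n - 2] if n >= 2 else 0.0
            out.append(x - a1 * y1 - a2 * y2)
        signal = out
    return signal
```

For example, `formant_peaks(lpc(synth_vowel([700.0, 1200.0], 8000), 4), 8000)` should return peak frequencies close to the two synthetic resonances. Real speech would additionally need pre-emphasis, windowing, and a higher LPC order, and tracking the peaks over successive frames would yield contours like the horizontal bars described in the caption.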

Work Packages, Insights and Outcomes

Within the Affective Mind project, IXP is responsible for several core components contributing to the development of the diagnostic system. These include the specification and selection of diagnostically relevant features, particularly in the auditory domain, as well as the systematic preparation, processing, and analysis of speech and vocal data.

To ensure robust and meaningful insights, IXP applies state-of-the-art feature extraction methods rooted in speech science, signal processing, and affective computing. The resulting prototype system has undergone initial evaluations for user acceptability, focusing on its usability, perceived relevance, and integration potential in clinical contexts.

Overview of the Multi-Modal Depression Classification Framework

Demonstrator Prototype: Sadness Detection via Speech

Successful Projects

Development of a screening and support portal as an extensive psycho-social diagnostic mode for refugees

Feature extraction from auditory, visual, and physiological data for a diagnostic system for affective disorders

A holistic view of interrelated frailties to reduce frailty risk by improving overall well-being

Feedback-assisted rehabilitation after surgery of the anterior cruciate ligament

A contribution of German elite sport to smart health promotion

Market overview, legal and technical requirement analysis, living lab study, and implementation of corporate health management programs

Desktop and virtual reality-supported module variants to bridge waiting times between therapy sessions and enrich ambulant therapy

Support of acute therapy and relapse prevention in depth-psychological treatment